ML Anomaly Detections

MetaDefender NDR's machine-learning family is the only detection engine that does not know in advance what malicious traffic looks like. It learns the shape of this network's Domain Name System, Hypertext Transfer Protocol, and flow telemetry as it streams past, then raises an alert on an event whose shape is isolated from everything the engine has already seen. The family catches novel, unsigned, undeclared activity — the opposite of the signature, intelligence-feed, and multi-antivirus families — and runs continuously without labeled training data, human supervision, or a separate training phase. This chapter explains how the Random Cut Forest (RCF) engine scores events, what it raises, and how operators tune it.

First-use acronym expansions in this chapter: ML (machine learning), RCF (Random Cut Forest), RRCF (Robust Random Cut Forest — the online-learning variant used in MetaDefender NDR), DNS (Domain Name System), HTTP (Hypertext Transfer Protocol), TLS (Transport Layer Security), SNI (Server Name Indication), JA3 and JA3S (TLS client and server fingerprint hashes), NXDOMAIN (Non-Existent Domain DNS response), TTL (Time-to-Live), TCP (Transmission Control Protocol), SYN / ACK / FIN / PSH / RST (TCP control flags), IP (Internet Protocol), IOC (Indicator of Compromise), C2 (command-and-control), DGA (Domain Generation Algorithm), FIFO (first-in-first-out eviction), CIDR (Classless Inter-Domain Routing), UI (user interface), FRD (Functional Requirements Document), MVP (Minimum Viable Product), PostMVP (features deferred beyond MVP).

What It Is

The ML anomaly family is an unsupervised, streaming-first anomaly detector built on the Robust Random Cut Forest algorithm. The service maintains one independent RCF detector per event type — one for DNS, one for HTTP, one for Flow — and scores every merged event against the detector that matches its Suricata event type. Each detector is a forest of Random Cut Trees that partition a feature space with random cuts; events that settle into sparsely-populated regions of the forest receive a high anomaly score, events that cluster with many others receive a low score. The detector learns continuously: every event it scores also updates the forest, so the model's sense of "normal" tracks the network as it evolves day over day.

Operators never train the model offline. There is no labeled dataset, no training phase, no scheduled retraining. The detector warms up inside the first few hundred events it sees — scores on the first events after a cold start are discarded — and improves its separation as live traffic streams in. When a detector scores an event above the configured per-type threshold, the service emits an ML anomaly alert carrying the score, the threshold it crossed, the model version, and the original event so analysts can pivot straight into the triggering record without leaving the alert.

The family's value is complementary to every other detection engine: Suricata signatures match on known packet content, the C2 and InSights families match on curated threat intelligence, MetaDefender Core scans extracted files, and the behavioral family detects specific named traffic shapes (beaconing, exfiltration, tunneling). The ML family catches the long tail — events whose shape simply does not fit what has been seen before in this network, regardless of whether any rule or feed recognizes them.

What It Detects

The family surfaces deviations from learned baselines rather than named threats. Typical findings fall into four loose categories.

- Novel command-and-control infrastructure. Beacon check-ins, non-standard TLS handshakes, and unfamiliar DNS patterns originating from a compromised host that no signature or IOC feed has yet catalogued. The RCF detector does not need to know the destination is malicious — it only needs the event to look isolated relative to the network's learned norm.

- DNS misuse and DGA activity. Query-rate bursts, high NXDOMAIN ratios, anomalous query-type distributions, unusual TTL variability, unusually long or deeply-subdomained names, and n-gram patterns consistent with Domain Generation Algorithms. The DNS detector combines query and answer counts, the event's hour, and upstream feature context to isolate DNS shapes the network rarely produces.

- TLS client or server profile anomalies. Unfamiliar JA3 or JA3S fingerprints, deprecated cipher preferences, short-validity or self-signed certificates, unusual extension orders or Server Name Indication patterns. TLS features are baselined alongside DNS and flow characteristics and contribute to the per-event score when a flow's TLS handshake is atypical.

- Flow-level rarity. Byte and packet asymmetries, uncommon port or protocol combinations, and temporal patterns that the detector's sliding window has not seen before. Flow anomalies correlate strongly with exfiltration staging, lateral-movement probes, and tooling traffic from non-standard clients.

The family does not, by itself, explain why an event is anomalous. It highlights which events are worth a closer look; the analyst uses the Hunt page's per-protocol sidebar sections to read the underlying DNS, HTTP, TLS, or flow record and decide whether the anomaly is malicious, benign-but-unusual (a newly-deployed service starting up), or a model warmup artifact.

How It Works

Each RCF detector runs a small, fixed pipeline per event.

- Feature extraction. The detector converts the incoming merged event into a 15-dimensional numeric feature vector, normalized to the unit interval so that features of disparate scale (bytes, hours, boolean flags) contribute evenly. The flow block contributes bytes-to-server, bytes-to-client, packets-to-server, packets-to-client, flow age, a boolean for whether the flow has already alerted, and the flow's start hour and minute. The TCP block contributes five boolean flags (SYN, ACK, FIN, PSH, RST). The HTTP block contributes status code and response length. The DNS block contributes query count and answer count. A temporal feature carries the hour of the event timestamp.

- Shingling. The detector concatenates the last three feature vectors into a sliding window (the shingle), producing a 45-dimensional point that encodes not just the current event but recent context. This is what lets the model catch temporal patterns — sequence anomalies rather than single-event anomalies.

- Scoring. The shingle is inserted into the forest of Random Cut Trees and scored on its co-displacement — a measure of how much the tree structure changes when the point is removed. Events that settle into densely-populated regions score low (the forest barely changes when they leave); events that settle in sparse regions score high (their removal visibly simplifies the forest). The score is a non-negative floating-point value.

- Whitelist adjustment. Before the score is compared to the threshold, an infrastructure-aware whitelist may reduce it. The whitelist ships with patterns for MetaDefender NDR's own platform traffic, Kafka, PostgreSQL, service-discovery and pod-network IPs, multicast, broadcast, and a short allowlist of common internal endpoints. Matching events either have their score multiplied by a fractional reduction factor (0.4 to 0.9) or are excluded entirely. Whitelist action, reduction factor, and the pre-adjustment score are preserved on the alert payload so analysts can see what the raw score was.

- Threshold comparison and alert emission. The adjusted score is compared to the per-event-type threshold: 3.0 for DNS, 8.0 for HTTP, 20.0 for Flow. Events above the threshold are emitted as ML anomaly alerts on the streaming ML alerts stream; events at or below are dropped.

- Online model update. Regardless of whether the event alerted, the forest evicts its oldest shingle and inserts the current one, keeping each detector's memory bounded (128 points per tree by default, across 20 trees per detector). This is what makes the family online — the forest continuously re-learns what normal looks like and adapts to gradual network change.
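The shingling step above can be sketched in a few lines. This is a minimal illustration, not the service's code: it assumes the 15-dimensional feature vector is already normalized, and the `ShingleBuffer` class name is hypothetical.

```python
from collections import deque
from typing import Deque, List, Optional

FEATURE_DIM = 15   # per-event feature vector size described above
SHINGLE_SIZE = 3   # default shingle_size


class ShingleBuffer:
    """Hypothetical helper: keeps the last SHINGLE_SIZE feature vectors."""

    def __init__(self, shingle_size: int = SHINGLE_SIZE) -> None:
        self._size = shingle_size
        self._window: Deque[List[float]] = deque(maxlen=shingle_size)

    def push(self, features: List[float]) -> Optional[List[float]]:
        """Add one feature vector; return the flattened shingle once full."""
        if len(features) != FEATURE_DIM:
            raise ValueError(f"expected {FEATURE_DIM} features, got {len(features)}")
        self._window.append(list(features))
        if len(self._window) < self._size:
            return None  # not enough context yet to form a shingle
        # Concatenate oldest-to-newest into a single point.
        return [value for vector in self._window for value in vector]


buffer = ShingleBuffer()
first = buffer.push([0.1] * FEATURE_DIM)   # None: window not full
second = buffer.push([0.2] * FEATURE_DIM)  # None: window not full
third = buffer.push([0.3] * FEATURE_DIM)   # 45 values
fourth = buffer.push([0.4] * FEATURE_DIM)  # oldest vector has slid out
```

Because the window slides by one event at a time, each scored point shares two of its three vectors with the previous point, which is what lets a forest react to sequences rather than isolated events.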

The alert engine picks up the ML alerts stream, runs its pass-through MLAnomalyDetection rule, stamps the alert at the unified Medium severity, and publishes it to the Hunt page and Dashboard alongside every other alert. On the Hunt page, the alert row carries the anomaly score and threshold as columns; the sidebar renders the original event verbatim so the analyst can read the DNS, HTTP, or flow record that triggered the score.

Three process details are worth keeping in mind while reading the rest of the chapter. First, the detector's memory is bounded — older patterns fall out of the forest as new ones arrive, which means a rare traffic type that suddenly becomes common (a newly-deployed service, a network migration) will score high while the forest is learning it and low once it settles. Second, the forest is per-event-type — DNS rarity does not influence HTTP scores. Third, the family does not assign its own severity on MVP; the alert engine's pass-through rule stamps every ML anomaly at Medium, and the usual IOC auto-escalation rule takes over from there.

Trigger Conditions

An ML anomaly alert fires when a detector's post-whitelist score exceeds its per-event-type threshold. The fields below are what analysts read when triaging the alert.

| Field | Meaning |
|---|---|
| ml_rcf_anomaly.anomaly_score | The per-event anomaly score after whitelist adjustment. Floating-point, non-negative. A score of 3.5 on a DNS event is barely above threshold; a score of 20 on the same event is extreme. |
| ml_rcf_anomaly.threshold | The per-event-type threshold the score crossed (DNS 3.0, HTTP 8.0, Flow 20.0 by default). Present on every alert so analysts see the boundary without memorizing it. |
| ml_rcf_anomaly.suricata_event_type | The original Suricata event type that was scored: dns, http, flow, tls, alert, and so on. Tells the analyst which detector fired and which sidebar sections to read. |
| ml_rcf_anomaly.original_score | Present only when the whitelist reduced the score. Shows the raw score before reduction so analysts can see how aggressively the whitelist is smoothing traffic. |
| ml_rcf_anomaly.whitelist_action | Present only when the whitelist matched. Typically reduced (score multiplied by a factor) or excluded (event would have been dropped; never appears on an emitted alert since excluded events do not alert). |
| ml_rcf_anomaly.whitelist_factor | Present only when the whitelist reduced the score. A value between 0.4 and 0.9: the factor the original score was multiplied by. |
| ml_rcf_anomaly.model_version | Identifier of the RCF model that produced the score. Lets analysts group alerts by model era when the configuration changes. |
| ml_rcf_anomaly.version_model | Semantic version of the model (for example, 0.1.0). |
| ml_rcf_anomaly.detector_config | Identifier for the detector configuration: num_trees, shingle_size, tree_size, and per-event-type threshold bundled into one logical snapshot. |
| ml_rcf_anomaly.event_id | The identifier of the original Suricata event that scored anomalous. Use this to pivot to the full event in the Hunt page. |
| ml_rcf_anomaly.timestamp | Timestamp of the original anomalous event (not the scoring time). |
| ml_rcf_anomaly.original_event | The full merged event payload (DNS, HTTP, TLS, flow, or alert block) that was scored. Rendered in the sidebar via the standard per-protocol sections when present. |

A single merged event produces exactly one ML anomaly alert when its score crosses the threshold; there is no per-entity reduction like MetaDefender Core's positive-engine maximum. When multiple events in quick succession all score high (a host burst of anomalous DNS queries, for example), each event raises its own alert — analysts working the queue see the cluster and pivot back to the shared source IP.
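The trigger condition can be illustrated with a short sketch. It assumes the default thresholds from this chapter and treats event types without a detector as unscored; the function name `build_anomaly_block` and its parameters are hypothetical, not part of the product.

```python
from typing import Any, Dict, Optional

# Default per-event-type thresholds quoted in this chapter.
THRESHOLDS = {"dns": 3.0, "http": 8.0, "flow": 20.0}


def build_anomaly_block(
    event_type: str,
    raw_score: float,
    whitelist_factor: Optional[float] = None,
    excluded: bool = False,
) -> Optional[Dict[str, Any]]:
    """Return an ml_rcf_anomaly-style block, or None when no alert fires."""
    threshold = THRESHOLDS.get(event_type)
    if threshold is None or excluded:
        return None  # no detector for this type (assumption) or whitelist exclusion
    score = raw_score if whitelist_factor is None else raw_score * whitelist_factor
    if score <= threshold:
        return None  # events at or below the threshold are dropped
    block: Dict[str, Any] = {
        "anomaly_score": score,
        "threshold": threshold,
        "suricata_event_type": event_type,
    }
    if whitelist_factor is not None:
        # Optional fields appear only when the whitelist reduced the score.
        block["original_score"] = raw_score
        block["whitelist_action"] = "reduced"
        block["whitelist_factor"] = whitelist_factor
    return block


reduced_flow = build_anomaly_block("flow", 40.177, whitelist_factor=0.6)
plain_dns = build_anomaly_block("dns", 4.35)
suppressed = build_anomaly_block("flow", 30.0, whitelist_factor=0.6)  # 18.0 after reduction
```

The third call shows how a whitelist reduction can pull an otherwise alerting score back under the threshold, in which case nothing is emitted.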

Severity Classification

On MVP, the ML family does not assign its own native severity. The alert engine's pass-through MLAnomalyDetection rule sets every emitted alert at Medium regardless of the score value. Severity rises only when the IOC auto-escalation rule applies.

| Trigger | Unified Severity |
|---|---|
| Event exceeds the per-event-type score threshold (DNS > 3.0, HTTP > 8.0, Flow > 20.0) and no IOC intersection applies. | Medium |
| The anomalous event's source, destination, or queried domain coincides with a C2 feed hit or an InSights Threat Intelligence Database or Reputation Database match. | Critical (IOC auto-escalation) |

This conservative choice is deliberate. The raw anomaly score is a good ranking signal but a weak absolute-magnitude signal — a score of 3.5 on DNS is near the threshold, while a score of 20 on DNS is extreme, but comparable scores on HTTP or Flow mean different things because the thresholds differ. Rather than project the score onto a severity ladder that would need per-event-type calibration, MVP stamps every ML anomaly at Medium and relies on the unified IOC auto-escalation and on the analyst's queue ordering (see Confidence Scoring below) to drive priority.

The IOC auto-escalation rule described in Detection Overview promotes an ML anomaly alert to Critical / 0.99 confidence when any of the event's entities coincide with a C2 or InSights match. An anomalous DNS query to a known DGA domain on the C2 feed becomes a Critical alert; an anomalous HTTP flow to a REPDB-listed host becomes a Critical alert. The matched indicator is visible in the companion C2 Enrichment or Insights Enrichment sidebar section so analysts can see which feed drove the escalation.
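As a rough illustration of the escalation logic, the sketch below intersects an event's entities with a set of known indicators. The exact-match comparison, the function name `escalate_on_ioc`, and the sample indicator set are all assumptions made for the example; the real rule may match on ranges or domain suffixes.

```python
from typing import Dict, Iterable, List, Optional, Set


def escalate_on_ioc(
    entities: Iterable[Optional[str]], ioc_indicators: Set[str]
) -> Dict[str, object]:
    """Return severity, confidence, and the indicators that drove escalation."""
    present = {entity for entity in entities if entity}
    matched: List[str] = sorted(present & ioc_indicators)
    if matched:
        return {"severity": "Critical", "confidence": 0.99, "matched": matched}
    # No intersection: the pass-through Medium severity stands.
    return {"severity": "Medium", "confidence": None, "matched": []}


# Hypothetical indicator set standing in for C2 and InSights matches.
iocs = {"203.0.113.7", "bad.example.net"}

# Entities are the source IP, destination IP, and queried domain (if any).
hit = escalate_on_ioc(["172.16.128.202", "172.16.133.54", "bad.example.net"], iocs)
miss = escalate_on_ioc(["172.16.128.202", "172.16.133.54", None], iocs)
```

Returning the matched indicators alongside the verdict mirrors the way the enrichment sidebar shows which feed entry caused the promotion.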

Confidence Scoring

Confidence on ML anomaly alerts maps the adjusted score onto the unified confidence scale from Detection Overview so analysts can order work inside the Medium severity band without comparing raw scores across event types. The bands below are general interpretive guidance and are expressed relative to each threshold: a score just above 3.0 on DNS, 8.0 on HTTP, or 20.0 on Flow sits at the floor of the low-confidence band because it barely crossed its threshold.

| Trigger | Alert Confidence |
|---|---|
| IOC auto-escalation fires for this alert. | 0.99 |
| Score is at least three times the per-event-type threshold: an extreme anomaly that the forest considers strongly isolated. | 0.90 |
| Score is between two and three times the per-event-type threshold: strongly anomalous. | 0.80 |
| Score is between 1.3 and two times the per-event-type threshold: moderately anomalous. | 0.65 |
| Score is between 1.0 and 1.3 times the per-event-type threshold: barely above the minimum, and likely deserves context before acting. | 0.50 |

The 0.99 band is reserved for IOC auto-escalation, consistent with every other detection family. The 0.50 band is where most alerts land in a well-tuned deployment; once the RCF detectors have warmed up and the whitelist has learned the environment's infrastructure, the tail of extreme-score alerts thins out and most day-to-day alerts cluster near their threshold. Analysts ordering the queue inside the Medium severity band work the 0.90 to 0.80 alerts first and use the 0.65 to 0.50 alerts as corroborating context rather than as standalone priorities.
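The band table can be read as a function of the score-to-threshold ratio. The sketch below is one possible reading: it assumes each band includes its lower bound (so a ratio of exactly 2.0 lands in the 0.80 band), which the table does not state explicitly, and the function name `confidence` is hypothetical.

```python
def confidence(score: float, threshold: float, ioc_escalated: bool = False) -> float:
    """Map an adjusted anomaly score onto the confidence bands above."""
    if ioc_escalated:
        return 0.99  # reserved for IOC auto-escalation
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    ratio = score / threshold
    if ratio <= 1.0:
        raise ValueError("scores at or below the threshold do not alert")
    if ratio >= 3.0:
        return 0.90
    if ratio >= 2.0:  # lower bound treated as inclusive (assumption)
        return 0.80
    if ratio >= 1.3:  # lower bound treated as inclusive (assumption)
        return 0.65
    return 0.50


dns_example = confidence(4.348, 3.0)     # ratio of about 1.45
flow_example = confidence(24.106, 20.0)  # ratio of about 1.21
extreme_dns = confidence(20.0, 3.0)      # ratio of about 6.67
```

Working in ratios rather than raw scores is what makes a DNS alert and a Flow alert comparable in the same queue even though their thresholds differ.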

Where It Surfaces

ML anomaly alerts appear in four places.

- Dashboard — Recent Severities donut. Every ML anomaly alert contributes to the Medium slice on MVP (or the Critical slice when IOC-auto-escalated). Top Signature Hits is Suricata-only and does not include ML alerts.

- Dashboard — Recent Alerts feed. Newest ML anomaly alerts flow through the cross-family feed, distinguishable by the ML RCF Anomaly Event type label. Clicking a row opens the same sidebar used on the Hunt page.

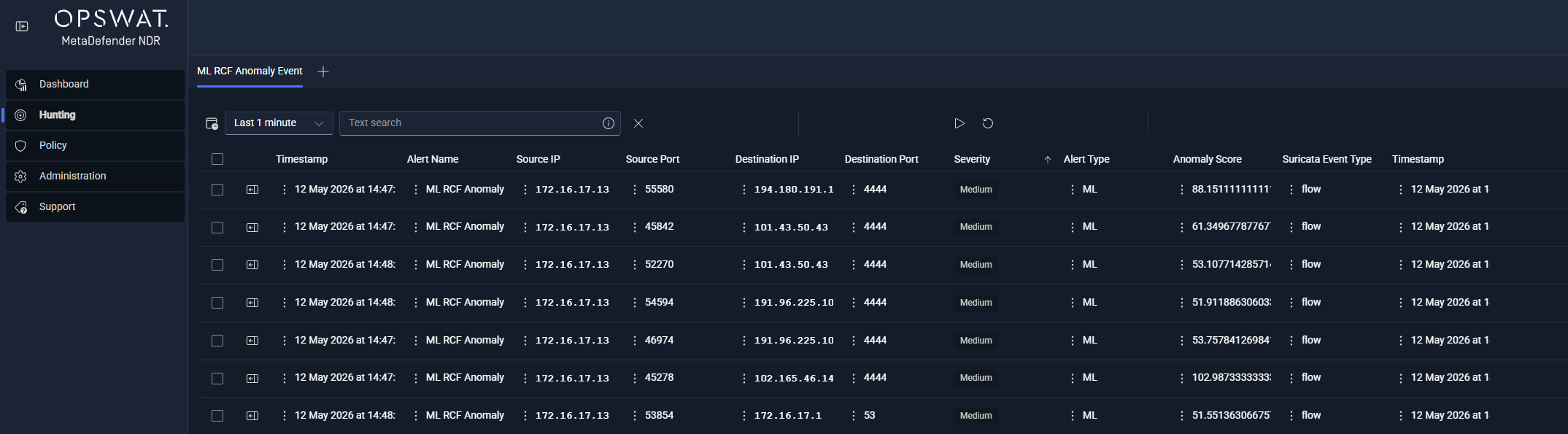

- Hunt page — ML RCF Anomaly Event sub-tab. Under the All Alerts bucket, this per-type sub-tab lists every ML anomaly alert with the base alert columns (minus proto, which is derived from the original event rather than the alert), plus the RCF-specific columns: anomaly_score, threshold, suricata_event_type, model_version, and the whitelist columns when present. Analysts sort by anomaly_score descending to surface the extreme outliers.

- Hunt detail sidebar — standard network section plus any companion protocol section. MVP does not wire a dedicated ML Anomaly sidebar section. Instead, the sidebar renders the Network Base block from the original event's five-tuple, and any of the per-protocol sections (Suricata DNS, Suricata HTTP, Suricata TLS, Suricata Flow, or Suricata FileInfo) that correspond to the anomalous event's type. The RCF fields (anomaly_score, threshold, whitelist_action, whitelist_factor, original_score, model_version) appear inline under the ml_rcf_anomaly block on the record, and the analyst reads the protocol-specific sidebar section to understand what traffic was flagged.

Alert Payload Example

Abbreviated JavaScript Object Notation for an ML anomaly alert on a DNS event that scored 4.35 against the DNS threshold of 3.0. The underlying event is a standard Suricata DNS record; the alert adds the ml_rcf_anomaly block and the alert_type: "ml_rcf_anomaly" discriminator. A Flow-type alert with whitelist reduction is shown immediately after to illustrate the optional whitelist fields.

```json
{
  "alert_type": "ml_rcf_anomaly",
  "event_type": "alert",
  "timestamp": "2026-01-06T15:42:57.265609+0000",
  "src_ip": "172.16.128.202",
  "dest_ip": "172.16.133.54",
  "src_port": 53,
  "dest_port": 52510,
  "proto": "UDP",
  "severity": 3,
  "ml_rcf_anomaly": {
    "anomaly_score": 4.34806359852651,
    "threshold": 3.0,
    "suricata_event_type": "dns",
    "event_id": "1235111043704487-dns-1767714177279188372",
    "timestamp": "2026-01-06T15:42:57.265609+0000",
    "model_version": "0.1.0",
    "version_model": "0.1.0",
    "detector_config": "rcf-dns-default",
    "original_event": {
      "event_type": "dns",
      "dns": {
        "type": "query",
        "rrname": "a1b2c3d4e5f6g7h8i9.example.net",
        "rrtype": "A"
      }
    }
  },
  "rule_name": "MLAnomalyDetection",
  "rule_salience": 5
}
```

A Flow-type alert whose original score was reduced by the whitelist carries the three optional whitelist fields alongside the adjusted score:

```json
{
  "alert_type": "ml_rcf_anomaly",
  "event_type": "alert",
  "timestamp": "2026-01-06T15:42:57.514901+0000",
  "src_ip": "172.16.133.25",
  "dest_ip": "172.16.139.250",
  "src_port": 63547,
  "dest_port": 5440,
  "proto": "TCP",
  "severity": 3,
  "ml_rcf_anomaly": {
    "anomaly_score": 24.106,
    "threshold": 20.0,
    "suricata_event_type": "flow",
    "event_id": "947872398360607-flow-1767714177699397329",
    "timestamp": "2026-01-06T15:42:57.514901+0000",
    "original_score": 40.177,
    "whitelist_action": "reduced",
    "whitelist_factor": 0.6,
    "model_version": "0.1.0",
    "version_model": "0.1.0",
    "detector_config": "rcf-flow-default"
  },
  "rule_name": "MLAnomalyDetection",
  "rule_salience": 5
}
```

In the second example the whitelist reduced a raw score of 40.177 to 24.106; the post-reduction value still crosses the Flow threshold of 20.0 and the alert emits. On an HTTP anomaly the same block carries suricata_event_type: "http", threshold: 8.0, and typically an original_event.http payload with method, hostname, URL, status code, and length; analysts read the Suricata HTTP sidebar section for the triggering request metadata.
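An analyst or integration script can sanity-check the whitelist fields on a payload like the Flow example. The sketch below parses an abbreviated copy of that alert and confirms that original_score multiplied by whitelist_factor reproduces anomaly_score; the helper name `whitelist_consistent` and the tolerance are assumptions for illustration.

```python
import json
import math

# Abbreviated copy of the Flow alert shown above.
alert_json = """
{
  "alert_type": "ml_rcf_anomaly",
  "ml_rcf_anomaly": {
    "anomaly_score": 24.106,
    "threshold": 20.0,
    "suricata_event_type": "flow",
    "original_score": 40.177,
    "whitelist_action": "reduced",
    "whitelist_factor": 0.6
  }
}
"""


def whitelist_consistent(block: dict, rel_tol: float = 1e-3) -> bool:
    """Check that the reduced score matches original_score * whitelist_factor."""
    if "original_score" not in block:
        return True  # whitelist did not touch this alert
    expected = block["original_score"] * block["whitelist_factor"]
    return math.isclose(expected, block["anomaly_score"], rel_tol=rel_tol)


block = json.loads(alert_json)["ml_rcf_anomaly"]
consistent = whitelist_consistent(block)
above_threshold = block["anomaly_score"] > block["threshold"]
margin = block["anomaly_score"] / block["threshold"]  # how far past the threshold
```

A relative tolerance is used because the payload rounds the adjusted score to three decimal places.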

Tuning Considerations

Every RCF tunable is Policy-managed and takes effect without a service restart. The knobs divide into three groups.

Detector capacity and temporal depth.

- num_trees (default 20). Number of Random Cut Trees in each detector's forest. More trees stabilize scoring and reduce variance but increase memory and compute per event. Environments with unusually diverse DNS or flow populations may benefit from raising this to 30 or 40; small or highly homogeneous networks can lower it to 10 with minimal accuracy loss.

- shingle_size (default 3). Size of the sliding window the detector concatenates into each scored point. Larger shingles capture longer temporal patterns at the cost of slower warmup and higher memory per shingle; smaller shingles adapt faster but lose sequence sensitivity. The default balances both.

- tree_size (default 128). Maximum points stored per tree before first-in-first-out eviction. Controls how much history the model remembers. Raising this slows the model's response to genuine network-behavior changes (a newly-deployed service stays "anomalous" longer); lowering it makes the model more reactive but less stable.
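To get a feel for how the three capacity knobs interact, the sketch below derives a few sizes from the documented defaults. The `DetectorConfig` class is a hypothetical illustration, not the product's configuration object, and the float count is only a rough upper bound that ignores tree bookkeeping overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectorConfig:
    """Hypothetical container for the capacity tunables described above."""

    num_trees: int = 20
    shingle_size: int = 3
    tree_size: int = 128
    feature_dim: int = 15  # per-event feature vector size from this chapter

    @property
    def shingle_dim(self) -> int:
        """Dimensionality of each scored point."""
        return self.feature_dim * self.shingle_size

    @property
    def stored_points(self) -> int:
        """Maximum points held across the whole forest."""
        return self.num_trees * self.tree_size

    @property
    def stored_floats(self) -> int:
        """Rough upper bound on stored feature values per detector."""
        return self.stored_points * self.shingle_dim


default = DetectorConfig()
larger = DetectorConfig(num_trees=40, shingle_size=4)
```

With the defaults this gives 45-dimensional points and at most 2,560 stored points per detector; doubling num_trees doubles the stored points, while raising shingle_size widens every point.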

Anomaly sensitivity.

- Per-event-type anomaly threshold. Tuned defaults are DNS 3.0, HTTP 8.0, and Flow 20.0 — validated against production-like traffic with the whitelist enabled to yield a manageable alert volume (roughly 1-2 percent of events). Lowering a threshold surfaces more anomalies at the cost of false positives; raising it tightens the queue at the cost of missing borderline events. Operators should adjust one threshold at a time and observe the resulting queue depth over a warmup window of at least one day.

Whitelist scope.

- Whitelist enable. On by default. Disabling the whitelist roughly quadruples alert volume in typical networks because cluster traffic, service discovery, DNS infrastructure, and multicast all score high without whitelist attenuation. Operators who need to validate what the raw model is seeing may temporarily disable the whitelist, but production operation should leave it on.

- Whitelist patterns. The whitelist accepts CIDR ranges, port lists, HTTP URL wildcards, and complete-exclusion rules for infrastructure populations the network operator knows are not threat surfaces. Updates propagate through the Policy broadcast so operators do not need to redeploy the RCF service.

A fourth consideration is calibration. Because the RCF detector adapts continuously, alert volume and average score drift as the network evolves. Operators should plan periodic precision reviews. The recommended practice is to sample 50 random alerts monthly, label each as a true positive, a false positive, or a warmup artifact, and adjust thresholds when precision falls outside the 50 to 80 percent range: below 50 percent the queue carries too many false positives, and above 80 percent the threshold is likely too restrictive and is hiding borderline events. The Updates management surface in (Link Removed) exposes per-detector threshold state and last-adjusted timestamps so operators can see at a glance where each detector sits.
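The monthly review reduces to a small calculation. The sketch below is one way to run it, with two assumptions that the text does not settle: warmup artifacts are set aside rather than counted as false positives, and low precision is answered by raising the threshold while high precision is answered by lowering it. The function name and label strings are hypothetical.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def review_precision(
    labels: Iterable[str], low: float = 0.50, high: float = 0.80
) -> Tuple[Optional[float], str]:
    """Compute precision over a labeled sample and suggest a threshold action."""
    counts = Counter(labels)
    judged = counts["tp"] + counts["fp"]  # warmup artifacts set aside (assumption)
    if judged == 0:
        return None, "no judged alerts in sample"
    precision = counts["tp"] / judged
    if precision < low:
        return precision, "raise threshold"  # too many false positives
    if precision > high:
        return precision, "lower threshold"  # likely too restrictive
    return precision, "keep threshold"


# A 50-alert sample: 20 true positives, 25 false positives, 5 warmup artifacts.
sample = ["tp"] * 20 + ["fp"] * 25 + ["warmup"] * 5
precision, action = review_precision(sample)
```

Here precision is 20 of 45 judged alerts, which falls under the 50 percent floor and suggests tightening that detector's threshold.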

The family's main false-positive surface at a cold start is the model warmup period — the first few hundred events each detector sees score higher than they should while the forest is still learning. This dissipates within minutes of live traffic for active event types (DNS and Flow) and within hours for rarer types (HTTP, in networks that are mostly TLS). Operators should disregard alerts in the first shingle-size-times-two events (six events per detector, in practice almost always within the first minute) and not retune thresholds based on warmup behavior alone.

Related Runbook

ML anomaly alerts route through (Link Removed), which covers reading the anomaly score and threshold together, interpreting whitelist adjustments, pivoting to the original event's protocol-specific sidebar section to understand what shape of traffic was flagged, correlating with concurrent behavioral and enrichment alerts on the same source IP, and deciding whether a cluster of ML alerts warrants escalation. Alerts that fire on hosts carrying concurrent Beaconing or Data Exfiltration behavioral detections, or on flows whose destinations appear in the C2 or InSights feeds (the IOC auto-escalation case), enter (Link Removed) directly.